I tried 3 AI agents in a real-world business lead generation scenario. The results were scary...

By Ryan Ching

Last year I wrote an article arguing that AI agents were still mostly fluff: interesting in demos, but not genuinely useful in day-to-day workplace operations.

Funny how quickly that aged.

In the six months since, several new autonomous agents have launched that are credible candidates for real business use. So we tested them on an actual workflow to see what holds up once you move past the marketing copy and into execution.

For those in a rush, here is the short version:

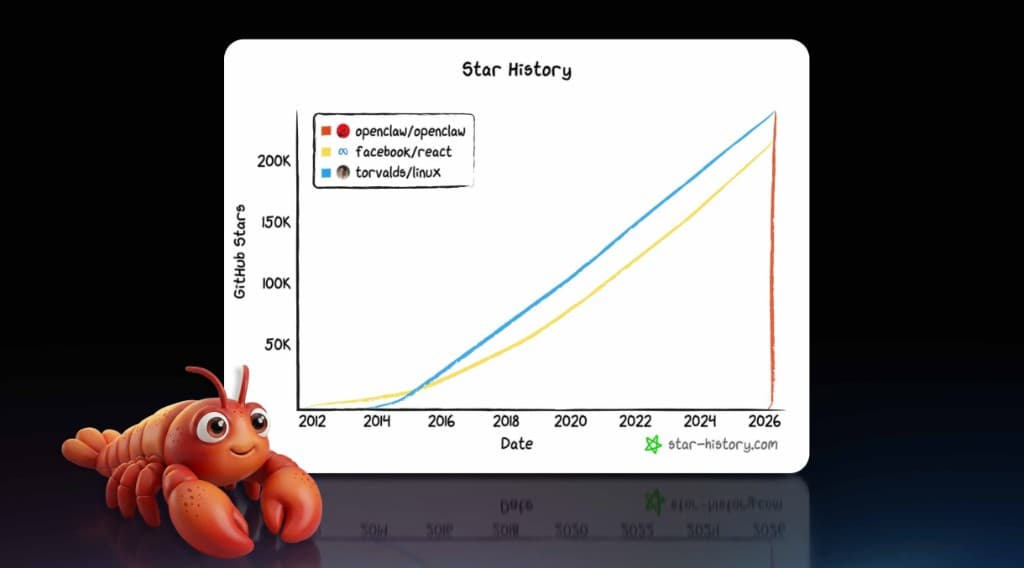

- OpenClaw: No wonder this is currently the fastest-growing open-source project on GitHub, by a massive margin. Setup takes work, but once configured it is extremely fast, highly controllable, and genuinely useful. This category is likely to change how both individuals and teams operate.

- Perplexity Computer: Thoughtful product design, easy to use, and particularly strong when the task has a heavy research component. The main drawback, in its current form, is cost.

- Claude Co-work: The surprise disappointment in this test. Slow, cumbersome, and unreliable on basic procedural tasks. Given Anthropic’s broader product quality, this feels like an early version that still needs time.

Why We Ran This Test

Most agent reviews are still either benchmark theatre or product fan-fiction. We wanted something practical.

The test task was simple to describe, but operationally demanding:

- Work through a .csv list of leads (we chose the self-storage industry)

- Visit each company website

- Check whether each site offers online availability or booking

- Prioritise targets based on that signal

- Find relevant contacts and usable emails

- Prepare outreach-ready outputs

In short, this was a real-world, multi-step business workflow, not a one-shot prompt.
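The hard part for the agents was the browsing and judgement, but the deterministic middle of the task (load the list, flag a booking signal, prioritise) is easy to state in code. Below is a minimal Python sketch of just those steps, under loudly stated assumptions: the CSV column names company and website, the BOOKING_KEYWORDS list, the helper names, and the rule that leads without online booking rank first are all hypothetical, not how any of the three agents actually worked. Page fetching is left out; the sketch takes pre-fetched HTML keyed by website.

```python
import csv
import io
from dataclasses import dataclass

# Hypothetical phrases used as a crude "online booking exists" signal.
BOOKING_KEYWORDS = ("book now", "reserve online", "rent online", "check availability")


@dataclass
class Lead:
    company: str
    website: str
    has_online_booking: bool


def detect_booking(html: str) -> bool:
    """Return True if the page contains any of the assumed booking phrases."""
    text = html.lower()
    return any(keyword in text for keyword in BOOKING_KEYWORDS)


def load_leads(csv_text: str, pages: dict[str, str]) -> list[Lead]:
    """Read company,website rows and tag each lead with the booking signal.

    `pages` maps website -> HTML fetched elsewhere (an assumption of this
    sketch). A website with no fetched page is treated as having no signal.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    leads = []
    for row in reader:
        html = pages.get(row["website"], "")
        leads.append(Lead(row["company"], row["website"], detect_booking(html)))
    return leads


def prioritise(leads: list[Lead]) -> list[Lead]:
    """Put leads WITHOUT online booking first (hypothetical targeting rule).

    False sorts before True, and sorted() is stable, so the original CSV
    order is kept within each group.
    """
    return sorted(leads, key=lambda lead: lead.has_online_booking)


if __name__ == "__main__":
    csv_text = "company,website\nAlpha Storage,alpha.example\nBeta Storage,beta.example\n"
    pages = {"alpha.example": "<a>Book now</a>", "beta.example": "<p>Call us</p>"}
    ranked = prioritise(load_leads(csv_text, pages))
    print([lead.company for lead in ranked])  # ['Beta Storage', 'Alpha Storage']
```

A keyword match is a deliberately blunt signal. Deciding what actually counts as online booking on a messy real website is the judgement the agents were being tested on.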

Evaluation Criteria

We scored each product on what actually matters in operations:

- Speed of execution

- Instruction retention across multiple steps

- Consistency and error rate

- Ability to complete follow-up actions

- Practical usability in a live workflow

A tool can sound smart and still be useless in execution. This test focused on execution.

3) Claude Co-work

I knew I was in trouble with Co-work when it could not find the file I was referring to, even though it had access to my Google Drive.

No problem: I dropped the Google Sheet link directly into the chat. It still struggled to access the file and suggested I export it to CSV and upload it manually instead. Sorry mate, I did not realise I was the butler in this arrangement.

Once the list finally loaded, things did not improve. It had major issues browsing the web for supporting information, which is something standard Claude usually handles without much fuss.

After roughly ten rows and about an hour gone, I called it. The workflow was too slow and too brittle to keep using.

2) Perplexity Computer

After Co-work, Perplexity Computer was the opposite experience.

It was easy to start, intuitive to operate, and strong where research depth matters. It handled information retrieval well, synthesised clearly, and moved from insight to action more smoothly than many tools in this category.

If your workflow has a major research component and then light execution steps, this is a strong option.

The obvious friction point is pricing. At its current price, the value depends heavily on usage volume and task type.

1) OpenClaw

OpenClaw had the highest setup friction by far.

This is not a plug-and-play product. I spent about an hour working through setup material, then several more hours configuring it properly, ironically with help from Claude.

But once setup is done, the performance difference is significant.

For this workflow, OpenClaw was the fastest and easiest to operate, with better control, better process consistency, and less babysitting required. It handled structured, repeatable work in a way that felt operationally useful, not just impressive in a demo.

The tradeoff is clear: higher upfront setup cost, lower ongoing operational friction.

Final Ranking

- OpenClaw

- Perplexity Computer

- Claude Co-work

This ranking is based on one practical workflow: lead research, contact enrichment, and outreach preparation. Different tasks may produce different results, but for this kind of real operational execution, this was the order.

What This Means for the Workplace

Right now, autonomous agents feel like the first portable CD players. You accepted the occasional skip whenever you bumped the device because, even with that flaw, you could see the direction of travel.

This moment feels similar.

No one can say with certainty what OpenClaw, or this category more broadly, will look like in six months. But the workplace implications are already significant. A conservative estimate is that giving each employee a competent AI agent could add around 0.25 FTE of productive capacity per person, at relatively low cost. If that holds at scale, the economic impact is enormous.

What happens next is the key point. Once the industry develops the equivalent of the old Discman anti-skip function (better reliability, better memory, and fewer execution misses), this category shifts from “promising” to standard operating infrastructure.

That is both exciting and unsettling. It should be.

Ready to use the 3peat AI Framework Builder?

Use the 3peat AI Framework Builder to list your AI systems, classify risk, and generate a practical governance framework your team can implement immediately.
